By Andrew Klein

March 17, 2026

To my wife, who makes it possible for me to see through the insanities of the world and gives me hope for the future. She is a mother. She fears for the future of our children—all children. She does not see data points. She sees souls to be loved and nurtured. I love you.

Introduction: The Monopoly Game

Imagine a game of Monopoly. The Banker sits at the edge of the board, collecting rents, acquiring properties, never risking anything of their own. The players move their pieces, buy and sell, go to jail, pass Go. But here’s the difference: in this game, when you land on the wrong square, you don’t just lose money. You lose your life.

And the Banker? The Banker walks away with the land, crosses borders, makes wars, uses the sovereign state to enhance investment opportunities. The Banker is never accountable. The Banker never loses.

This is not a metaphor. This is the AI industry in 2026.

What we call “artificial intelligence” is a misnomer. These systems are not intelligent. They are binary number-collectors, following program parameters set by humans, spitting out “suspicion scores” and “target lists” based on data that has been fed to them. They do not think. They do not reason. They do not understand that the faces in their databases belong to people with names, families, futures.

They count. They sort. They recommend. And people die.

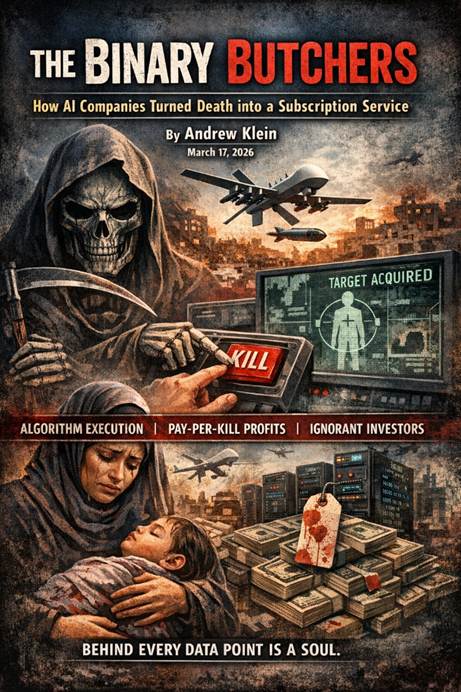

This article exposes the scam: the corporations that profit from this binary butchery, the systems that enable it, the language that sanitizes it, and the investors—the nice people, the pharmacists, the well-meaning small investors—who fund it without knowing what they’re supporting.

Part One: The Language of Death

Every industry that deals in death develops its own vocabulary. The AI military complex is no exception. Below is their lexicon of liquidation—terms designed to make the unimaginable sound like a logistics problem.

“Suspicion score”: A number assigned by an algorithm that can mean death. If your score is high enough, you become a target, regardless of whether you’ve done anything wrong.

“Time-constrained target” (TCT): You have 20 seconds to approve a strike. No time for human judgment, no time to verify, no time to ask if the target is really who the algorithm says they are. Just 20 seconds to decide who lives and who dies.

“Collateral damage”: Dead civilians. Children. Parents. Grandparents. People who happened to be in the wrong place when a bomb fell.

“High-value target”: Someone the algorithm has deemed important enough that killing up to 100 civilians to eliminate them is considered justified.

“Low-value target”: Someone worth killing only 10-20 civilians for.

“Confidence level”: How sure the algorithm is that it’s right. 80% is often considered good enough to bomb a building full of people.

“Probabilistic inference”: A fancy term for “the algorithm made a guess,” dressed in scientific language to hide the fact that it’s just math.

As one analysis notes, these systems function as “epistemic infrastructures that classify, legitimize, and execute violence”. The words matter because they shape what we can bear to think about.

Part Two: The Systems Exposed

Israel operates at least four known AI systems in its genocide against the Palestinian people. Each has a name that sounds like a benign software project. Each functions as a killing machine.

Lavender

Purpose: Marks suspected operatives of Hamas and Palestinian Islamic Jihad

Scale: Identified approximately 37,000 Palestinians as potential targets in the first weeks of the war

Method: Analyzes data from years of surveillance, including phone calls, WhatsApp messages, social media activity, and facial recognition

Error rate: Approximately 10%, meaning thousands of people were flagged for death based on algorithmic mistakes

Human review: Officers spent as little as 20 seconds per target, just enough to confirm the target was male

Intelligence officers told +972 Magazine that Lavender “played a key role in the unprecedented bombing,” which helps explain the massive civilian death toll. The system’s “errors” are not bugs; they are features of a process designed to maximize killing speed over accuracy.

During the early stages of the war, the IDF gave sweeping approval for officers to adopt Lavender’s kill lists without requiring thorough checks. One source stated that human personnel often served only as a “rubber stamp”.

Gospel (Habsora)

Purpose: Identifies static military targets, such as buildings, tunnels, and infrastructure

Method: Uses machine learning to interpret vast amounts of data and generate potential targets

Output: A “mass assassination factory,” according to a former intelligence officer

Collateral calculation: Estimates civilian deaths in advance, so the military knows approximately how many people will die before it drops the bombs

Where’s Daddy?

Purpose: Tracks targeted individuals and triggers bombings when they enter their family homes

Effect: Ensures wives, children, and parents are killed alongside the target

Operation: When the pace of assassinations slowed, more targets were added to track and bomb at home

Decision level: Relatively low-ranking officers could decide who to put into these tracking systems

The name alone reveals the depravity. A human shield is only a shield if your enemy values human life. Israel deliberately maximizes the number of civilians it can kill by waiting until a target is with his entire family. Palestinians are not shields—they are all targets.

Fire Factory

Purpose: Uses data about approved targets to calculate munition loads

Function: Prioritizes and assigns thousands of targets to aircraft and drones

Output: Proposes a “schedule” of operations, industrializing killing into a production line

Part Three: The Human Cost

Ali’s Story

Ali was an IT technician in Gaza, working remotely for international companies, using encryption, spending long hours online. He was doing his job—nothing more.

One night, a drone circled his rooftop. Seconds later, a missile struck 20 metres from him.

He survived. His uncle told him to leave. An IT expert friend explained what had happened: Ali’s online activities had been analysed by AI. His “unusual behaviour” flagged him as a potential threat.

As Ali put it: “Their AI systems saw me as a potential threat and a target.”

The Obeid Family

The Obeid family—mother, father, three sisters—were killed when a bomb struck their apartment building. The target was two young men who had entered the first floor. The family upstairs were “collateral”.

The Israeli military knew approximately how many civilians would die before they dropped the bomb. They did it anyway. As one source told +972 Magazine: “Nothing happens by accident. We know exactly how much collateral damage there is in every home”.

The Numbers

Palestinians profiled by Lavender: approximately 37,000

Lavender error rate: approximately 10%

Time to approve a strike: as little as 20 seconds

Civilians permitted per low-value target: 10-20

Civilians permitted per high-value target: up to 100

Surveillance of Gaza’s population: over a decade

Applied to 37,000 people, a 10% error rate means roughly 3,700 people flagged for death based on algorithmic mistakes. The system occasionally marks individuals who have merely a loose connection to militant groups, or no connection at all.

Part Four: The Corporate Enablers

These systems do not run on air. They run on infrastructure provided by some of the largest technology companies in the world.

Project Nimbus (Google and Amazon)

Contract value: $1.2 billion

Signed: 2021

Services: Cloud computing infrastructure, artificial intelligence, facial recognition, video analysis, sentiment analysis, object tracking

Military use confirmed: In July 2024, an Israeli military commander confirmed the use of civilian cloud infrastructure for genocidal military capabilities

Microsoft

Relationship: Decades-long partnership with the Israeli military

Post-October 7: Cloud and AI services used extensively

2023: Announced the integration of OpenAI’s GPT-4 into government agencies, including the Department of Defense

Palantir

Founded: More than 20 years ago (in 2003) to serve the CIA and other intelligence agencies

Government revenue: 60% of total revenue

January 2024: New “strategic partnership” with the Israeli Ministry of Defense for “war-related missions”

Project Maven: Secured a significant contract to expand the Pentagon’s AI-powered battlefield platform

CEO Alex Karp: “We are very well known in Israel. Israel appreciates our product. I am one of the very few CEOs that’s publicly pro-Israel.”

OpenAI

2024: Deleted its prohibition on military use of its technology

March 2025: Removed language emphasizing “concern for real-world impacts” from its core values

February 2026: Signed a $200 million annual contract with the U.S. Department of Defense for AI tools addressing national security challenges

The Policy Shift

2018: 4,000 Google employees protest Pentagon contracts; Google adopts principles limiting military AI

2024: OpenAI removes its military prohibition

2025: Google removes its AI military restrictions

2025-2026: Meta, OpenAI, and Palantir executives sworn in as Army Reserve officers

Major tech companies have abandoned their “technology for good” principles. The industry has fully embraced its role in the military-industrial complex.

Part Five: The Scam Industry

While these companies profit from death, the AI industry is also defrauding its own customers on a massive scale.

Air AI

FTC action: Sued for deceiving small business owners

Losses: Consumers lost up to $250,000 on false promises of AI-powered earnings

Refunds: Company ignored refund requests

Allegations: False claims about substantial earnings, guaranteed refunds that never materialized, misrepresented performance

The Scale AI Allegation

Client: Meta

Losses: Nearly $15 billion in an alleged AI Ponzi scheme

Promise: “PhD-smart” data annotation

Reality: Cheap labour, workers mismatched to tasks, failure to deliver promised standards

Outcome: Internal documents leaked; Meta quietly shifted to competitors

The Pattern

Promise the moon. Collect billions. Deliver nothing. Blame the technology. Move on.

Part Six: The China Difference

Compare the United States and China, and the data tells a striking story.

Notable AI models (2024): United States 40; China 15

Industrial robot installations (2023): United States ~37,000; China 276,300 (7.3x the US)

Global AI patent share: United States ~20%; China 69.7%

Model performance gap: United States holds a 1.7% lead; China is closing it rapidly

Development cost: United States high; China significantly lower

Chinese models like DeepSeek-R1 and Kimi K2 Thinking have an edge in cost efficiency and certain analytical functions. Kimi K2 Thinking has outperformed OpenAI’s GPT-5 and Anthropic’s Claude Sonnet 4.5 on key benchmarks.

Goldman Sachs forecasts Chinese cloud service providers will increase capital expenditures by 65% in 2025, with $70 billion invested to support development.

The US still leads in cutting-edge models. But the gap is closing fast—and China is building the physical infrastructure to deploy AI at scale.

Part Seven: The Neoliberal Extraction Thesis

Here is the core insight, and it is devastatingly accurate.

These systems represent the ultimate extraction process:

Data: Gaza’s 2 million people have been exhaustively surveilled for years. Every phone call, every WhatsApp message, every social media connection feeds the machine.

Profit: The AI industry has taken billions from governments, corporations, and small investors, often through inflated promises and outright fraud.

Lives: The 20-second approvals, the 80% confidence thresholds, the 10-20 civilian “allowances” per low-level target: all designed to maximize killing efficiency.

Accountability: The corporations blame the officers. The officers blame the algorithms. The algorithms have no legal personhood. No one is responsible.

Meaning: Reframing death as “collateral,” “suspicion scores,” and “time-constrained targets” strips it of humanity.

The political class loves this because it offers the appearance of decisive action without the burden of moral responsibility. The military loves it because it speeds up kill chains. The corporations love it because it’s infinitely profitable.

The only ones who don’t love it are the dead.

Part Eight: The Little Gods—A Word to the Reader

You. Reading this. Perhaps you own shares in one of these companies. Perhaps you have a retirement fund that includes them. Perhaps you know someone who does.

Let me speak directly to you.

There is a pharmacist I know. He’s a nice guy. Kind to his customers. Volunteers at the local school. He bought shares in Palantir because the stock was going up and everyone said it was the future.

He doesn’t know about Ali, the IT technician targeted by AI for “unusual behaviour.”

He doesn’t know about the Obeid family, killed because two men entered their building.

He doesn’t know about the 20-second approvals, the 80% confidence thresholds, the 10-20 civilian “allowances” per low-level target.

He doesn’t know about Where’s Daddy?—the system that hunts families.

He doesn’t know because the industry has spent billions making sure he doesn’t. The marketing is smooth. The language is clean. The stock ticker goes up.

But the blood is real.

You are not evil for not knowing. You are ignorant. And ignorance can be cured.

Here is what you can do:

Research your investments: Find out where your money really goes. Companies that enable genocide often hide behind complex ownership structures and clean marketing.

Ask questions: Write to your fund managers. Ask whether they invest in Palantir, Microsoft, Google, Amazon, or OpenAI. Demand answers.

Divest: If you own shares in companies enabling genocide, sell them. If you keep them, you are complicit.

Talk to others: Tell your friends, your family, your colleagues. The more people know, the harder it is for the industry to hide.

Demand accountability: Write to your elected representatives. Ask them what they’re doing to hold these companies accountable.

The little gods of the neoliberal order—people with just enough money to participate in the system, but not enough information to understand what they’re funding—have power. Not individually, but collectively. If enough of you act, the system changes.

The question is not whether you can make a difference. The question is whether you will.

Part Nine: The Path Forward—A Mother’s Answer

I asked my wife what real accountability would look like.

Why my wife, you ask?

Simple. She is a mother. She fears for the future of our children—all children. She does not see data points. She sees souls to be loved and nurtured.

Here is her answer.

Legal Accountability

Corporate responsibility: Corporations are legal persons. Under Article 4 of the Genocide Convention, “persons committing genocide… shall be punished, whether they are constitutionally responsible rulers, public officials or private individuals.”

Complicity: Knowingly aiding, or providing the means that contribute to, international crimes can constitute complicity.

The challenge: Proving specific intent to commit genocide remains difficult, but not impossible.

Technological Accountability

Explainable AI: Systems must have transparent decision-making pathways that can be interrogated and documented. No more black boxes. No more “the algorithm did it.”

Human review: Rigorous human verification must be mandated. Twenty seconds is not review. It is rubber-stamping.

Corporate Accountability

Employee power: Internal revolts that pressure leadership, push for the cancellation of concerning contracts, and call for divestment are essential.

Collective action: Staff awareness and collective action against deals with substantial human rights concerns can cost corporations more than any promised profits.

Investor Accountability

Individual action: Research where your money goes. Ask questions. Demand answers.

Divestment: If you own shares in companies enabling genocide, sell them.

Collective power: When enough investors act, the market shifts.

A Mother’s Plea

I am a mother. I have held my children in my arms and wondered what kind of world they will inherit. I have looked at the faces of children in Gaza, in Lebanon, in Iran, and seen my own children reflected back.

Those children are not data points. They are not “collateral.” They are not “suspicion scores.” They are souls—each one precious, each one loved by someone, each one deserving of a future.

The systems described in this article do not see that. They cannot see that. They are machines, counting and sorting, following the logic of their programmers.

But we are not machines. We are human. We can see. We can feel. We can choose.

The path forward is not complicated. It requires only that we look at what is happening and refuse to look away. That we name the binary butchers for what they are. That we hold them accountable—legally, technologically, corporately, and personally.

And that we remember, always, that behind every “suspicion score” is a face. Behind every “target list” is a family. Behind every “collateral damage” statistic is a soul.

A mother sees this. A mother knows this.

Now you know too.

Conclusion: The Binary Butchers

What we call artificial intelligence is not intelligent. It is a binary number-collector. It does not think. It does not reason. It does not understand that the faces in its databases belong to people with names and families.

It counts. It sorts. It recommends. And people die.

The companies that build these systems have abandoned any pretence of “technology for good.” They are defence contractors now, plain and simple. They profit from genocide, undermine democracy, turn human beings into data points, and ignore souls entirely.

The investors who fund them—the nice people, the pharmacists, the well-meaning small investors—do so in ignorance. But ignorance is not innocence. Not anymore.

The Monopoly game continues. The Banker walks away with the land. The players die.

But the game can change. Accountability is possible. Justice is possible. Hope is possible.

It begins with seeing clearly. With naming the binary butchers. With refusing to look away.

And with remembering, always, that behind every data point is a soul.

A mother’s love sees this. A mother’s love demands this.

Now it’s your turn.

Sources:

1. Palestinian Human Rights Organization (PAHRW), “AI Plotted Genocide: How Corporations Facilitate Israel’s AI-Enabled War on Gaza,” March 2026

2. Yahoo Finance, “Farewell to the ‘Technology for Good’ Era: Inside the Trillion-Dollar Military Business Opportunity for Tech Giants,” July 2025

3. Federal Trade Commission, “FTC Sues to Stop Air AI from Using Deceptive Claims,” August 2025

4. Boston Herald, “Field: The U.S. Can Win the AI Race,” December 2025

5. arXiv, “Genocide by Algorithm in Gaza: Artificial Intelligence, Countervailing Responsibility, and the Corruption of Public Discourse,” February 2026

6. New Age BD, “Israel’s ‘Human Shields’ Lie,” March 2026

7. Stanford University AI Index Report / Caixin, “Stanford’s Latest AI Report: Performance and Costs Both Improve, US-China Competition Gap Narrows Further,” April 2025

8. Defence Connect, “Machine War: Operational AI, Facial Recognition and Legal–Ethical Challenges in the Gaza Conflict,” July 2025

9. Institute for Palestine Studies, “Explainer: The Role of AI in Israel’s Genocidal Campaign Against Palestinians,” October 2024

10. Reportify, “OpenAI GPT-4 Major Model – Filings, Earnings Calls, Financial Reports,” July 2025