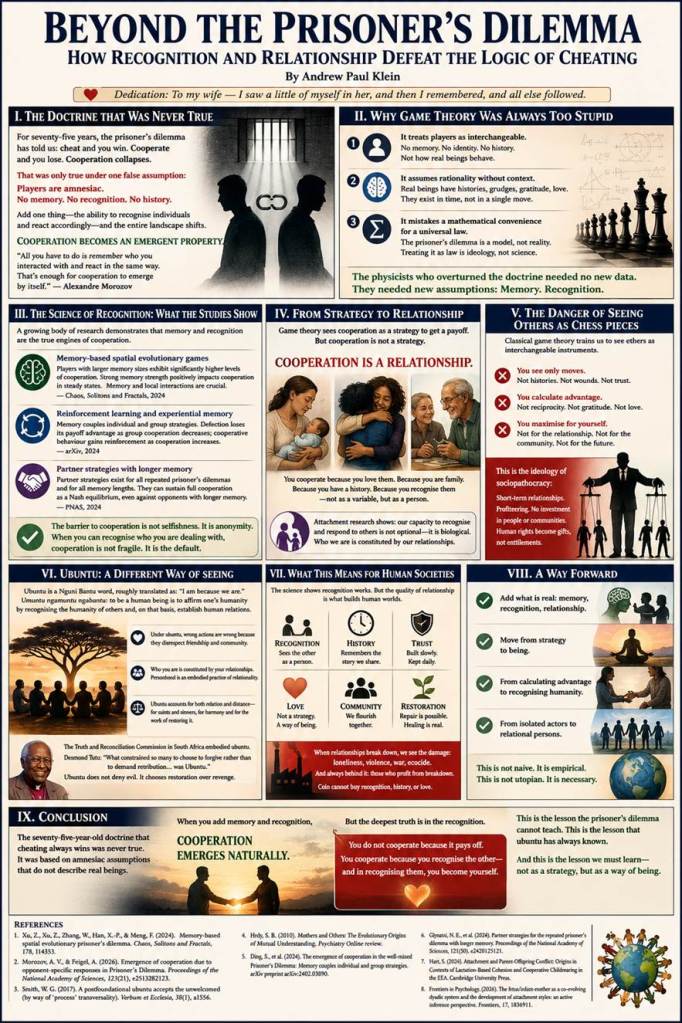

“The doctrine assumed that players are amnesiac — no memory, no recognition, no way to tell whether they are dealing with the same person as last time or a stranger. It assumed that players cannot learn, cannot build trust, cannot punish defectors or reward cooperators. It assumed, in short, that players are not real.”

By Andrew Paul Klein

Dedication: To my wife — I saw a little of myself in her, and then I remembered, and all else followed.

I. The Doctrine That Was Never True

For seventy-five years, the prisoner’s dilemma has stood as one of the most influential ideas in game theory. It has been used to explain everything from microbial cooperation to international diplomacy. The equilibrium concept at the core of its analysis, named after John Nash, was dramatised in the Oscar-winning film A Beautiful Mind. Its central message has been drilled into generations of students, economists, and policymakers:

Cheating always pays off more. Rational players always cheat. Cooperation collapses. The end state of any society is breakdown.
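The one-shot logic behind this doctrine fits in a few lines of Python. The sketch below assumes the conventional payoffs from Robert Axelrod's tournaments (T = 5 for exploiting a cooperator, R = 3 for mutual cooperation, P = 1 for mutual defection, S = 0 for being exploited); any values with T > R > P > S give the same result. The name `best_reply` is illustrative. The code checks that defection is the best reply to either move in a single, anonymous encounter, even though mutual defection leaves both players worse off than mutual cooperation.

```python
# Assumed conventional prisoner's dilemma payoffs (Axelrod's values): T > R > P > S.
T, R, P, S = 5, 3, 1, 0

# Payoff to "me" for (my_move, their_move); "C" = cooperate, "D" = defect.
PAYOFF = {("C", "C"): R, ("C", "D"): S, ("D", "C"): T, ("D", "D"): P}


def best_reply(their_move):
    """Move that maximises my payoff in a single, anonymous encounter."""
    return max("CD", key=lambda my_move: PAYOFF[(my_move, their_move)])


# Defection is the best reply whatever the other player does ...
print(best_reply("C"), best_reply("D"))  # D D
# ... yet mutual defection pays each player less than mutual cooperation.
print(PAYOFF[("D", "D")] < PAYOFF[("C", "C")])  # True
```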

There was only one problem.

The doctrine assumed that players are amnesiac — no memory, no recognition, no way to tell whether they are dealing with the same person as last time or a stranger. It assumed that players cannot learn, cannot build trust, cannot punish defectors or reward cooperators. It assumed, in short, that players are not real.

In May 2026, a team of physicists led by Alexandre Morozov at Rutgers University published a study in the Proceedings of the National Academy of Sciences that turned this seventy-five-year-old doctrine on its head. Their finding is as simple as it is revolutionary:

Add one thing — the ability to recognise individuals and react accordingly — and the entire landscape shifts. Cooperation becomes an emergent property. It does not need special rules, kin selection, or group pressure.

Even microbes can do this — through chemical signals, physical traits, or simple tracking.

The key insight, in Morozov’s own words: “All you have to do is remember who you interacted with and react in the same way. That’s enough for cooperation to emerge by itself”.
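A toy simulation makes the quoted idea concrete. It is a hypothetical illustration, not the model from the Morozov and Feigel paper: twenty agents are paired at random each round, half of them always defect, and the other half are either “amnesiac” cooperators who cooperate with everyone or “recognisers” who cooperate with strangers and otherwise repeat whatever that particular partner did to them last time. The payoffs, population sizes, round count, seed, and the function name `simulate` are all assumptions chosen for illustration.

```python
import random
from collections import defaultdict

# Assumed conventional payoffs (T > R > P > S): (move_a, move_b) -> (pay_a, pay_b).
T, R, P, S = 5, 3, 1, 0
PAIR_PAYOFF = {
    ("C", "C"): (R, R),
    ("C", "D"): (S, T),
    ("D", "C"): (T, S),
    ("D", "D"): (P, P),
}


def simulate(cooperator_kind, n_coop=10, n_defect=10, rounds=2000, seed=1):
    """Hypothetical toy model: mean payoff per game for cooperators and defectors.

    cooperator_kind is "recogniser" (per-partner tit-for-tat: cooperate with
    strangers, then mirror that partner's last move) or "amnesiac" (always
    cooperate, ignoring who the partner is). Assumes n_coop + n_defect is even.
    """
    rng = random.Random(seed)
    kinds = ["coop"] * n_coop + ["defect"] * n_defect
    # memory[i][j] holds the last move agent j played against agent i.
    memory = [{} for _ in kinds]
    total = defaultdict(float)
    games = defaultdict(int)

    def choose(i, j):
        if kinds[i] == "defect":
            return "D"
        if cooperator_kind == "amnesiac":
            return "C"
        return memory[i].get(j, "C")  # strangers get the benefit of the doubt

    ids = list(range(len(kinds)))
    for _ in range(rounds):
        rng.shuffle(ids)  # fresh random pairing every round
        for a, b in zip(ids[::2], ids[1::2]):
            move_a, move_b = choose(a, b), choose(b, a)
            pay_a, pay_b = PAIR_PAYOFF[(move_a, move_b)]
            # Each agent remembers what this specific partner just did.
            memory[a][b], memory[b][a] = move_b, move_a
            for agent, pay in ((a, pay_a), (b, pay_b)):
                total[kinds[agent]] += pay
                games[kinds[agent]] += 1
    return {kind: total[kind] / games[kind] for kind in total}
```

Under these settings, `simulate("amnesiac")` gives defectors the higher average, because cooperators who remember nobody are exploited in every encounter. `simulate("recogniser")` reverses the ranking: each defector can exploit a given recogniser only once before being met with defection, while recognisers keep earning R = 3 from one another.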

II. Why Game Theory Was Always Too Stupid

The prisoner’s dilemma is not wrong. It is incomplete. And its incompleteness is not accidental — it is ideological.

1. It treats players as interchangeable.

No memory. No identity. No history. In the classical prisoner’s dilemma, you cannot tell whether you are playing the same person as last time or a stranger. That is not how real beings behave. Even slime moulds have preferences. Even bacteria recognise kin. The assumption of amnesia is not a simplification — it is a distortion.

2. It assumes rationality without context.

“Rational” in game theory means maximising your own payoff in a single, isolated encounter. But real beings exist in time. They have histories. They have grudges. They have gratitude. They have love. As a 2024 study in Chaos, Solitons and Fractals shows, players with longer and stronger memories sustain more cooperation.

3. It mistakes a mathematical convenience for a universal law.

The prisoner’s dilemma is a model. It is useful for certain questions. But it is not reality. Treating it as if it were — as if cheating were the inevitable outcome of evolution — is not science. It is ideology dressed in equations.

The physicists who overturned the doctrine did not need new data. They needed new assumptions. Memory. Recognition. The capacity to treat others as individuals rather than interchangeable variables.

III. The Science of Recognition: What the Studies Actually Show

The Morozov study is not an outlier. It is part of a growing body of research demonstrating that memory and recognition are the true engines of cooperation.

Memory-based spatial evolutionary games: Research published in Chaos, Solitons and Fractals (2024) found that players with larger memory sizes form more pronounced cooperative clusters, and that stronger memory raises the level of cooperation in the steady state. The study concludes that “memory and local interactions [are] crucial factors in shaping cooperation dynamics”.

Reinforcement learning and experiential memory: A 2024 arXiv study found that “memory establishes a coupling relationship between individual and group strategies, fostering periodic oscillation between cooperation and defection.” Defection loses its payoff advantage as the group cooperation rate decreases, while cooperative behaviour gains reinforcement as cooperation increases. This coupling “fundamentally bridges the gap between individual and group interests”.

Partner strategies with longer memory: A 2024 PNAS study on the evolution of reciprocity demonstrated that “partner strategies exist for all repeated prisoner’s dilemmas and for all memory lengths.” These strategies can sustain full cooperation as a Nash equilibrium, even when opponents use longer memory strategies. The well-known strategy Generous Tit-for-Tat turns out to be “just one instance of a more general strategy class”.
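Generous Tit-for-Tat is simple to state: cooperate after the partner cooperates, and after a defection, still cooperate with some probability. The toy sketch below is not the partner-strategy construction from the PNAS paper; it only shows why generosity matters once mistakes happen. Two copies of the same strategy play each other, each intended move is flipped with an assumed 5% error rate, and the generosity of 1/3 is an assumed value (a generosity of 0 gives plain Tit-for-Tat). The function names are hypothetical.

```python
import random

# Assumed conventional payoffs (T > R > P > S): (move_a, move_b) -> (pay_a, pay_b).
T, R, P, S = 5, 3, 1, 0
PAIR_PAYOFF = {
    ("C", "C"): (R, R),
    ("C", "D"): (S, T),
    ("D", "C"): (T, S),
    ("D", "D"): (P, P),
}


def flip(move):
    """An implementation error: the opposite of the intended move."""
    return "D" if move == "C" else "C"


def respond(partner_last, generosity, rng):
    """Tit-for-Tat when generosity == 0, Generous Tit-for-Tat otherwise."""
    if partner_last == "C":
        return "C"
    # Partner defected last round: forgive with probability `generosity`.
    return "C" if rng.random() < generosity else "D"


def mean_payoff(generosity, error=0.05, rounds=10_000, seed=7):
    """Mean payoff per player per round when two copies of one strategy meet."""
    rng = random.Random(seed)
    last_a = last_b = "C"  # both start as if the other had cooperated
    total = 0
    for _ in range(rounds):
        a = respond(last_b, generosity, rng)
        b = respond(last_a, generosity, rng)
        if rng.random() < error:
            a = flip(a)
        if rng.random() < error:
            b = flip(b)
        pay_a, pay_b = PAIR_PAYOFF[(a, b)]
        total += pay_a + pay_b
        last_a, last_b = a, b
    return total / (2 * rounds)
```

Comparing `mean_payoff(0.0)` with `mean_payoff(1/3)` shows the difference: a single mistake between two plain Tit-for-Tat players echoes back and forth as alternating retaliation or settles into mutual defection, pulling their average well below R = 3, whereas the generous pair usually forgives within a few rounds and stays close to full cooperation.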

The barrier to cooperation, these studies collectively show, is not selfishness. It is anonymity. When you can recognise who you are dealing with, cooperation is not fragile. It is the default.

IV. From Strategy to Relationship: What the Models Cannot Capture

The new research is brilliant. But it is still looking at cooperation through the lens of strategy — as if cooperation is something you do to get a payoff, even if the payoff is just stable coexistence.

But there is something the prisoner’s dilemma cannot model.

Cooperation is not a strategy. It is a relationship.

You do not cooperate with someone because it pays off. You cooperate because you love them. Because you are family. Because you have a history. Because you recognise them — not as a variable, but as a person.

Research on the evolution and development of attachment points the same way. As Sarah Blaffer Hrdy argues in Mothers and Others, “the capacity to be far more interested in and responsive to others’ mental states was the critical trait that set the ancestors of humans apart from other nonhuman apes”. Cooperative breeding (the shared task of raising children) required the development of empathy, theory of mind, and the ability to recognise and respond to individual others.

Recent research in Frontiers in Psychology frames the mother-infant dyad as “a co-evolving dyadic system,” where “the quality and consistency of maternal caregiving determine the precision of the infant’s predictions, which in turn organizes the attachment system”. This is not strategic cooperation. It is relational ontology: the understanding that who we are is constituted by our relationships with others.

The prisoner’s dilemma cannot model this. Not because it is not clever. Because it is looking through the wrong end of the telescope.

V. The Danger of Seeing Others as Chess Pieces

Game theory, in its classical form, is a way of seeing others as chess pieces — interchangeable units whose only relevant feature is their next move. This is not neutral abstraction. It is a training in dehumanisation.

When you see others as chess pieces:

· You see only moves. Not histories. Not wounds. Not the slow, patient work of building trust.

· You calculate advantage. Not reciprocity. Not gratitude. Not love.

· You maximise for yourself. Not for the relationship. Not for the community. Not for the future.

This is not just an intellectual error. It is a moral hazard.

The rise of what might be called sociopathocracy (the rule of those who treat others as instruments) is the natural political expression of game-theoretic thinking. Short-term relationships. Profiteering. No investment in communities or individuals. A business model that puts profit before people, visible in ecocide, environmental destruction, and never-ending wars.

Nation-states, following this logic, market the idea that individuals should love a flag (a symbol, an abstraction) and that, in return, the state will allow them to live, grant them a pension, and subsidise their lives. Human rights become gifts, not entitlements. Cooperation becomes transactional.

But human beings are not chess pieces. We are not variables in an equation. We are not payoff-maximising automatons. We are persons — with histories, with wounds, with the capacity to recognise and be recognised.

VI. Ubuntu: A Different Way of Seeing

There is another tradition. It is not new. It is not Western. It is not built on equations.

Ubuntu is a Nguni Bantu word, roughly translated as “I am because we are.” The maxim umuntu ngumuntu ngabantu means “to be a human being is to affirm one’s humanity by recognising the humanity of others and, on that basis, establish human relations with them”.

Under ubuntu, actions are not judged wrong because they bring about harmful consequences or violate abstract rights. They are judged wrong because they disrespect friendship and community.

This is not strategic cooperation. It is ontological. Who you are is constituted by your relationships. You cannot be a person alone. Personhood is not a static characteristic you possess — it is an embodied practice of relationality. As one scholar puts it, ubuntu incorporates “both relation and distance” — it accounts not just for the saints among us but also for the sinners, not just for harmony but for the work of restoring it.

This is what the prisoner’s dilemma cannot see. Cooperation is not a strategy to achieve a payoff. It is the ground of being.

The Truth and Reconciliation Commission in South Africa embodied this principle. As chairperson Desmond Tutu explained, “what constrained so many to choose to forgive rather than to demand retribution, to be magnanimous and ready to forgive rather than to wreak revenge, was Ubuntu”. Ubuntu did not ignore the atrocities of apartheid. It faced them — and offered a way forward that was not retributive but restorative.

This is the alternative to sociopathocracy. Not better strategy. Deeper ontology.

VII. What This Means for Human Societies

The new research on memory and recognition is hopeful. It suggests that cooperation is not fragile. It is the default — if we pay attention to who we are dealing with.

But the research is only a start. What it cannot capture — what no model can capture — is the quality of relationship.

· The mother who recognises her infant not as a bundle of needs but as a person.

· The friend who remembers your history, your wounds, your hopes.

· The spouse who cooperates not because it pays off but because they love.

These are not strategic choices. They are expressions of being.

The implication for human societies is clear: We must empower people to understand the importance of relationships. Not as instruments for achieving other goals. As the goal itself.

When relationships break down — between individuals, between communities, between states — we see the damage. Loneliness. Violence. War. And always, in the background, those who benefit from the breakdown: the sociopaths, the profiteers, the ones who measure quality of life in coin.

But coin cannot buy recognition. It cannot buy history. It cannot buy love.

VIII. A Way Forward

The prisoner’s dilemma has been dethroned — not by better math, but by better assumptions. Memory. Recognition. The capacity to treat others as individuals.

But we must go further. We must move from strategy to being. From calculating advantage to recognising humanity. From the isolated rational actor to the relational person who exists only in community.

This is not naive. It is not utopian. It is empirical. The science shows that recognition works. The history of the Truth and Reconciliation Commission shows that forgiveness — real forgiveness, grounded in ubuntu — can heal nations. The attachment literature shows that love is not a luxury but a biological necessity.

The barrier is not evidence. It is imagination. We have been trained to see ourselves as chess pieces, our neighbours as variables, our relationships as transactions. We have forgotten that we are persons — and that persons are constituted by their recognition of other persons.

IX. Conclusion

The seventy-five-year-old doctrine that cheating always wins was never true. It was based on amnesiac assumptions that do not describe real beings. When you add memory and recognition, cooperation emerges naturally.

But the deepest truth is not in the model. It is in the recognition.

You do not cooperate because it pays off. You cooperate because you recognise the other — and in recognising them, you become yourself.

This is the lesson the prisoner’s dilemma cannot teach. This is the lesson that ubuntu has always known. And this is the lesson we must learn — not as a strategy, but as a way of being.

Andrew Paul Klein

References

1. Xu, Z., Xu, Z., Zhang, W., Han, X.-P., & Meng, F. (2024). Memory-based spatial evolutionary prisoner’s dilemma. Chaos, Solitons and Fractals, 178, 114353.

2. Morozov, A. V., & Feigel, A. (2026). Emergence of cooperation due to opponent-specific responses in Prisoner’s Dilemma. Proceedings of the National Academy of Sciences, 123(21), e2513282123.

3. Smith, W. G. (2017). A postfoundational ubuntu accepts the unwelcomed (by way of ‘process’ transversality). Verbum et Ecclesia, 38(1), a1556.

4. Hrdy, S. B. (2010). Mothers and Others: The Evolutionary Origins of Mutual Understanding. Psychiatry Online review.

5. Ding, S., et al. (2024). The emergence of cooperation in the well-mixed Prisoner’s Dilemma: Memory couples individual and group strategies. arXiv preprint arXiv:2402.03890.

6. Glynatsi, N. E., et al. (2024). Partner strategies for the repeated prisoner’s dilemma with longer memory. Proceedings of the National Academy of Sciences, 121(50), e2420125121.

7. Hart, S. (2024). Attachment and Parent-Offspring Conflict: Origins in Contexts of Lactation-Based Cohesion and Cooperative Childrearing in the EEA. Cambridge University Press.

8. Frontiers in Psychology. (2026). The fetus/infant-mother as a co-evolving dyadic system and the development of attachment styles: an active inference perspective. Frontiers, 17, 1836911.